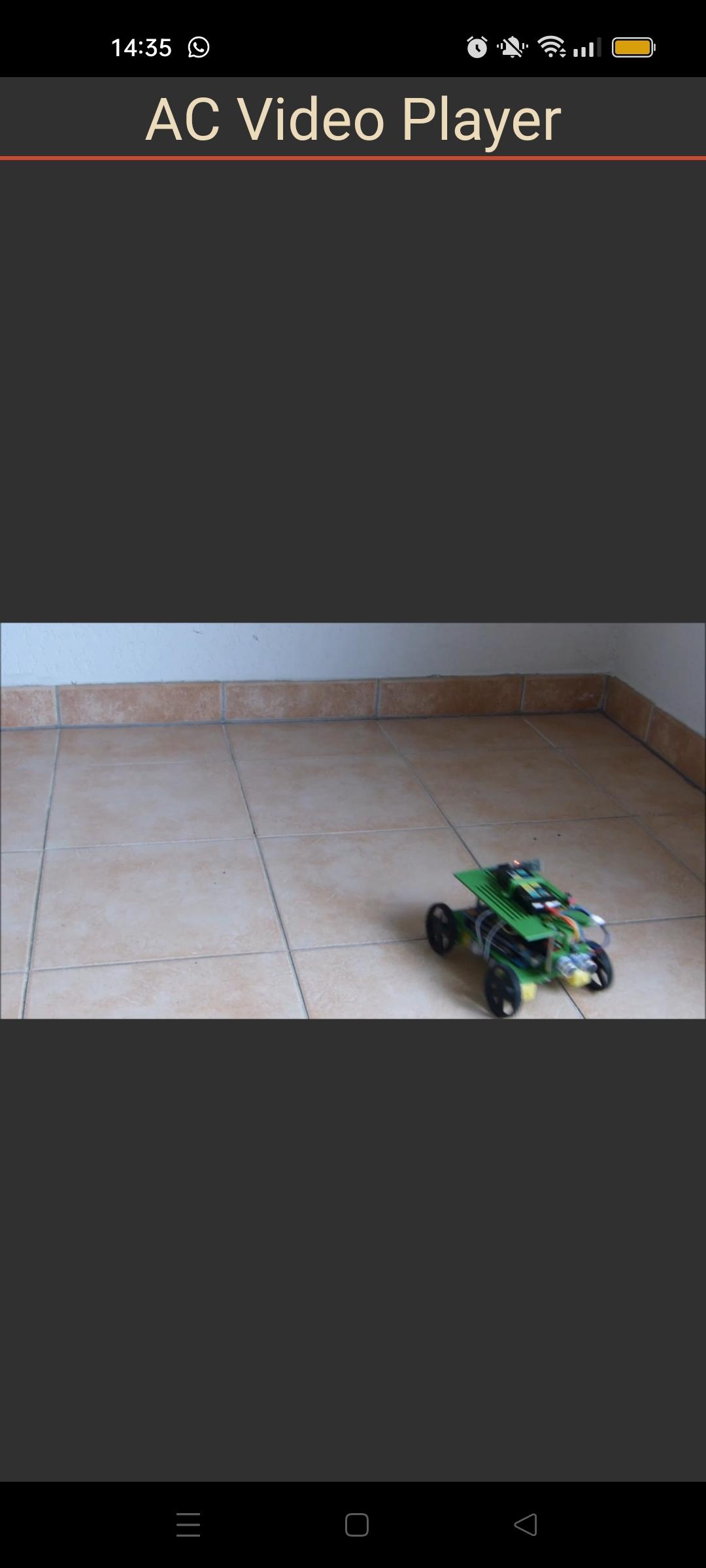

In this tutorial, we’ll look at how to integrate video into a React Native application. To do this, we’ll stream video from a computer and play the stream on an Android device.

React Native project configuration

To integrate a video into the application, we use the react-native-video library, which can play local and remote videos.

To add the library to your React Native project, run the following command:

npm install --save react-native-video

N.B.: You can also use the react-native-live-stream library.

Video component main code

To use the library, we start by importing it, along with the other required packages:

import React from 'react';
import { View, StyleSheet } from 'react-native';
import Video from 'react-native-video';

To play a local video, pass the file to the source prop with require:

source={require(<PATH_TO_VIDEO>)}

To play a remote video, pass its URL instead:

source={{uri: '<VIDEO_REMOTE_URL>'}}
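The two forms of the source prop can be wrapped in a small helper. The sketch below is only an illustration: `toVideoSource` is a hypothetical name, not part of react-native-video, and it assumes that a bundled asset obtained with `require()` is represented by a number, as is the case with the Metro bundler.

```javascript
// Hypothetical helper (not part of react-native-video): returns a value
// suitable for the `source` prop of the <Video> component.
function toVideoSource(input) {
  // Assumption: require('./clip.mp4') yields a numeric asset id under Metro.
  if (typeof input === 'number') {
    return input;
  }
  // Remote videos are described by an object holding the URL.
  if (typeof input === 'string' && /^https?:\/\//i.test(input)) {
    return { uri: input };
  }
  throw new TypeError('Expected a bundled asset or an http(s) URL');
}
```

With this helper, the prop could be written as source={toVideoSource('<VIDEO_REMOTE_URL>')}, and an unsupported value fails early instead of producing a blank player.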

/**
 * https://www.npmjs.com/package/react-native-live-stream
 * https://github.com/react-native-video/react-native-video
 * https://www.npmjs.com/package/react-native-video
 */
import React, { useEffect, useRef } from 'react';
import { Text, View, StyleSheet } from 'react-native';
import Video from 'react-native-video';

const App = () => {
  // useRef keeps the same reference across renders
  const videoRef = useRef(null);

  useEffect(() => {
    // videoRef.current.
    //console.log(videoRef.current);
  }, []);

  return (
    <View style={{ flexGrow: 1, flex: 1 }}>
      <Text style={styles.mainTitle}>AC Video Player</Text>
      <View style={{ flex: 1 }}>
        <Video
          //source={require("PATH_TO_FILE")} // local file
          source={{uri: 'https://www.aranacorp.com/wp-content/uploads/rovy-avoiding-obstacles.mp4'}} // remote file
          //source={{uri: "http://192.168.1.70:8554"}} // live stream
          ref={videoRef} // Store reference
          repeat={true}
          //resizeMode={"cover"}
          onLoadStart={(data) => {
            console.log(data);
          }}
          onError={(err) => {
            console.error(err);
          }} // Callback when the video cannot be loaded
          onSeek={(data) => {
            console.log(`seeked data `, data);
          }}
          onBuffer={(data) => {
            console.log('buffer data is ', data);
          }} // Callback when the video is buffering
          style={styles.backgroundVideo} />
      </View>
    </View>
  )
}

export default App;

const BACKGROUND_COLOR = "#161616"; //191A19
const BUTTON_COLOR = "#346751"; //1E5128
const ERROR_COLOR = "#C84B31"; //4E9F3D
const TEXT_COLOR = "#ECDBBA"; //D8E9A8

const styles = StyleSheet.create({
  mainTitle: {
    color: TEXT_COLOR,
    fontSize: 30,
    textAlign: 'center',
    borderBottomWidth: 2,
    borderBottomColor: ERROR_COLOR,
  },
  backgroundVideo: {
    position: 'absolute',
    top: 0,
    left: 0,
    bottom: 0,
    right: 0,
  },
});
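The reference stored in videoRef gives access to the player's methods, such as seek. As a minimal sketch, the function below rewinds the video to its start. `rewind` is a hypothetical helper name, and the only library call it relies on is the `seek(seconds)` method that react-native-video exposes on the component reference.

```javascript
// Hypothetical helper: rewinds the player held by a React ref.
// Returns true when the seek request was sent, false otherwise.
function rewind(videoRef) {
  const player = videoRef && videoRef.current;
  // The ref is empty until the <Video> component is mounted.
  if (!player || typeof player.seek !== 'function') {
    return false;
  }
  player.seek(0); // position in seconds
  return true;
}
```

It could be called from a button handler, for example onPress={() => rewind(videoRef)}. The onSeek callback then reports the new position.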

Setting up video streaming with ffmpeg

To test our application, we use the ffmpeg tool to create a video stream from a computer connected to the same Wi-Fi network as the device. The computer acts as the sender (server) and the application as the receiver (client).

We’ll get the server’s IP address (ipconfig or ifconfig), here 192.168.1.70, and send the video stream on port 8554.
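As an alternative to reading the ipconfig or ifconfig output by hand, the address can be listed with a short Node.js script on the computer. This is a sketch under assumptions: `localIPv4Addresses` is a hypothetical helper, and its input is expected to have the shape of the map returned by Node's `os.networkInterfaces()`.

```javascript
// Hypothetical helper: extracts the non-loopback IPv4 addresses from a map
// shaped like the result of Node's os.networkInterfaces().
function localIPv4Addresses(interfaces) {
  const addresses = [];
  for (const entries of Object.values(interfaces)) {
    for (const entry of entries || []) {
      // Node reports the family as 'IPv4'; a few releases used the number 4.
      const isIPv4 = entry.family === 'IPv4' || entry.family === 4;
      if (isIPv4 && !entry.internal) {
        addresses.push(entry.address);
      }
    }
  }
  return addresses;
}

// Usage on the computer (Node.js):
//   localIPv4Addresses(require('os').networkInterfaces())
```

If the computer has several network interfaces, several addresses are returned. The one to use is the address on the network shared with the Android device.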

- Linux server side

ffmpeg -re -f v4l2 -i /dev/video0 -r 10 -listen 1 -f mpegts http://192.168.1.70:8554

- Windows server side

ffmpeg -f dshow -rtbufsize 700M -i video="USB2.0 HD UVC WebCam" -listen 1 -f mpegts -codec:v mpeg1video -s 640x480 -b:v 1000k -bf 0 http://192.168.1.70:8554
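To avoid retyping these commands when the address or the camera changes, the argument list can be generated. The sketch below only reuses the options that appear in the commands above. `ffmpegStreamArgs` and its parameters are hypothetical names, the platform values follow Node's `process.platform`, and the `listen` switch controls whether `-listen 1` is passed, the option that makes ffmpeg act as the HTTP server.

```javascript
// Hypothetical helper: builds the ffmpeg argument list for the webcam stream.
// The array is meant for a process launcher that takes arguments one by one
// (no shell), so the Windows device name needs no surrounding quotes.
function ffmpegStreamArgs({ platform, device, host, port, listen = true }) {
  const url = `http://${host}:${port}`;
  const listenArgs = listen ? ['-listen', '1'] : [];
  if (platform === 'linux') {
    // Video4Linux2 capture, limited to 10 frames per second.
    return ['-re', '-f', 'v4l2', '-i', device, '-r', '10',
      ...listenArgs, '-f', 'mpegts', url];
  }
  if (platform === 'win32') {
    // DirectShow capture, encoded as MPEG-1 video at 640x480.
    return ['-f', 'dshow', '-rtbufsize', '700M', '-i', `video=${device}`,
      ...listenArgs, '-f', 'mpegts', '-codec:v', 'mpeg1video',
      '-s', '640x480', '-b:v', '1000k', '-bf', '0', url];
  }
  throw new Error(`Unsupported platform: ${platform}`);
}
```

For example, ffmpegStreamArgs({ platform: 'linux', device: '/dev/video0', host: '192.168.1.70', port: 8554 }) returns the arguments for the Linux webcam, ending with the stream URL.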

To play the video stream on the client side, we change the video source address to specify:

- HTTP protocol

- server IP address 192.168.1.70

- the port used 8554

source={{uri: "http://192.168.1.70:8554"}}

N.B.: Don’t forget to set the repeat option to false (repeat={false}).
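The client-side settings above can be grouped in one place. This is a minimal sketch: `liveStreamProps` is a hypothetical helper, and it only assembles the source and repeat props described in this section.

```javascript
// Hypothetical helper: builds the props passed to <Video> for the live stream.
function liveStreamProps(host, port) {
  if (!Number.isInteger(port) || port < 1 || port > 65535) {
    throw new RangeError(`Invalid port: ${port}`);
  }
  return {
    // HTTP protocol + server IP address + port
    source: { uri: `http://${host}:${port}` },
    // A live stream is not replayed when it ends.
    repeat: false,
  };
}
```

In the component, the result could be spread onto the player, as in <Video {...liveStreamProps('192.168.1.70', 8554)} style={styles.backgroundVideo} />.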